California CTC Common Standards: Standard 4

4.1 Comprehensive Assessment Plan & Annual Assessment Projects

4.1 Both the unit and its programs regularly and systematically collect, analyze, and use candidate and program completer data as well as data reflecting the effectiveness of unit operations to improve programs and their services.

The Attallah College maintains a Quality Assurance System (QAS) to inform continuous improvement based on data and evidence collected, maintained, and shared. Data inform practices and procedures and provide the basis for inquiry, additional data collection, revisions to programs, and new initiatives. Data and evidence are used to improve programs and consistency across programs, and to measure the impacts of programs and completers’ impact on P-12 student learning and development.

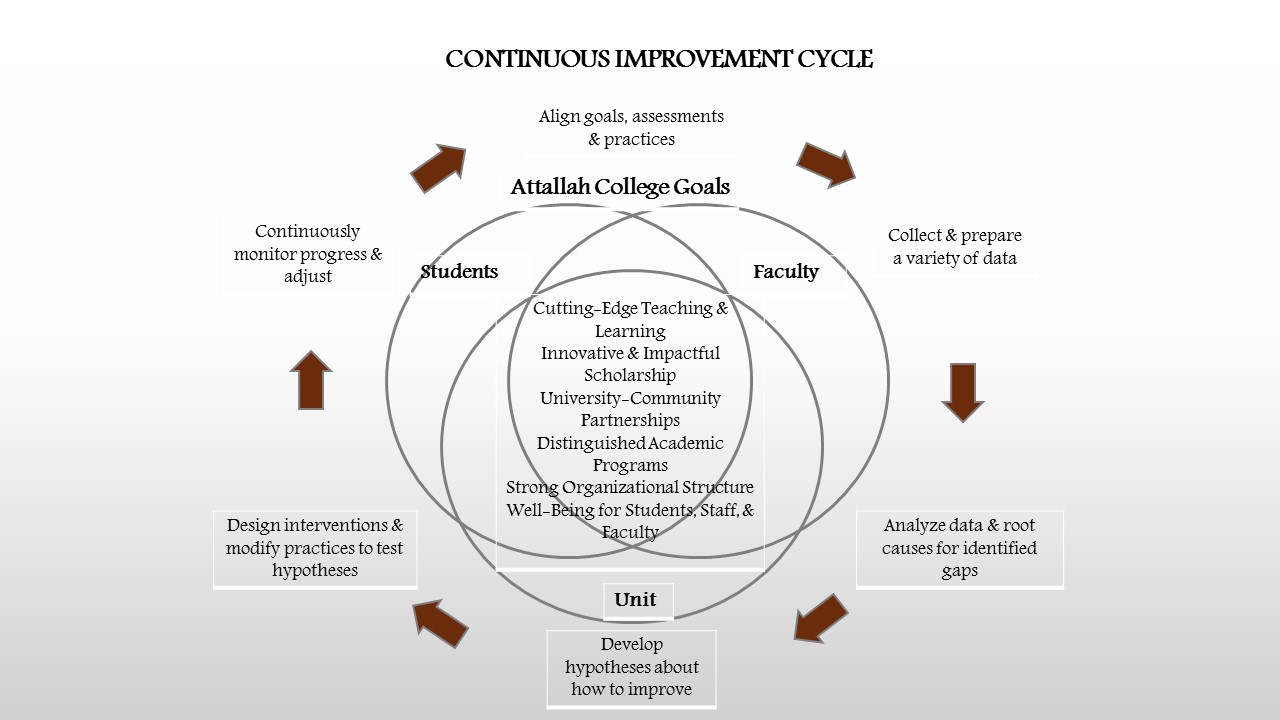

In 2016, Attallah College started implementing the current QAS, aimed at informing continuous improvement (see Figure 1).

Figure 1. Attallah College Continuous Improvement Cycle

The QAS is evidence-based and comprises multiple measures that monitor candidate progression through programs, completer satisfaction and achievements, and overall college effectiveness at multiple points. The Attallah College Office of Program Assessment and Improvement (Director, Dr. Michelle Hall, and Data Coordinator, Dr. Svetlana V. Levonisova) collects data from multiple sources and stores, organizes, and supplies those data to the programs across six assessment areas:

- Admission

- Student Progress and Support

- Student Performance

- Clinical Experiences

- Graduate Outcomes

- Program Review and Improvement

As illustrated in Figure 1, the QAS process is used for continuous review and assessment of candidates, faculty, programs, and Unit operations. The annual review process involves program coordinators along with faculty and staff (see MACI, MAT, School Counseling, and School Psychology faculty and staff profiles under the Program Faculty and Staff collapsible menu), who analyze the data for needs assessment and improvement planning, collect and review formative data, assess annual objectives, and formally aggregate, disaggregate, and analyze data for the next cycle. Candidate assessment data are collected and reviewed regularly to ensure appropriate progress in meeting college proficiencies, state competencies, and national standards. This process includes the review of data from entrance requirements, course-based assessments described as critical tasks such as field experiences, culminating experiences, exit requirements, and alumni follow-up reports. Candidate data are also used by faculty and administration to assess the effectiveness of programs, teaching, and Unit operations in preparing candidates. Thus, faculty and administration develop improvement plans based in large part on candidate data.

Table 1 summarizes the evidence that we collect, the data collection methods, and the reporting schedules for each area of review.

For each area of review, we have articulated a series of questions, summarized in Table 2, that drive our use of data and evidence.

Continuous Improvement. The Attallah College is under continuous review at several levels. First, the College is developing its use of data in decision making. Beginning in 2016, the College instituted Program Annual Reports that serve as opportunities for programs to review their program-specific data and compare data from one assessment cycle (term) to the next to identify trends, challenges, and areas for improvement. These reports, alongside the University-required program Annual Learning Outcomes Assessment Reports (ALOAR), serve as the analysis portion of the comprehensive quality assurance system. Taken together, these two pillars serve as the foundation for continuous improvement and quality assurance in the Attallah College.

4.2 Collecting Feedback and Engaging Stakeholders

4.2 The continuous improvement process includes multiple sources of data including 1) the extent to which candidates are prepared to enter professional practice; 2) the quality of the educational services provided to students during supervised practice; and 3) feedback from key stakeholders such as employers and community partners about the quality of the preparation.

Data and Evidence: Collected, Maintained, and Shared. Data are collected, monitored, stored, reported, and used by stakeholders both within and outside the College. Evidence collection is managed and administered by the Office of Program Assessment and Improvement in coordination with programs, and the evidence is shared with all stakeholders. The ongoing collection of evidence in response to the California Standards through the self-study process has illuminated many strengths of our quality assurance system. Data collected and supplied to programs for these reports are based on three factors. First, we base data collection on program-specific needs; for example, we redesigned our End-of-Semester Surveys to gain a clearer understanding of students’ perceptions of course utility versus access to resources (see sample End-of-Semester Survey). Next, we align our data collection with state and federal requirements, for example, to ensure that candidates gain fieldwork experience in diverse classrooms (initial completers) and schools (advanced completers) (see Teacher Education Appropriate Placements 2017-18 and Counseling Appropriate Placements 2017-18). Finally, we focus data collection on findings from prior cycles of data. These data provide the foundation for our program and college-wide analysis.

Representative. Our enrolled candidate and graduating student numbers are small and, as a result, we strive to collect data on all participants in all categories. We can lack representative data when we disaggregate by subcategory, where we may have only one student in a category, rendering the data insignificant; even so, we consider such data as part of our triangulation process. We are currently in the process of delineating, in all of our data results, what the sample does and does not represent, to promote clarity of analysis and program improvement.
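The small-subgroup concern described above can be sketched in code. The following is a minimal illustration only, not the College's actual procedure: the record fields, the sample records, and the threshold `MIN_CELL_SIZE` are all hypothetical. It counts records per subcategory and flags, rather than drops, any subgroup too small to generalize from, so that the data remain available for triangulation.

```python
from collections import Counter

# Hypothetical threshold: subgroups smaller than this are flagged, not dropped.
MIN_CELL_SIZE = 5


def disaggregate(records, key, min_cell_size=MIN_CELL_SIZE):
    """Count records per subgroup and flag subgroups below the size threshold."""
    counts = Counter(record[key] for record in records)
    return {
        group: {"n": n, "small_sample": n < min_cell_size}
        for group, n in counts.items()
    }


# Made-up records for illustration; the "program" field name is an assumption.
records = [{"program": "MAT"}] * 6 + [{"program": "School Psychology"}]

summary = disaggregate(records, "program")
for group, info in summary.items():
    note = " (small sample: interpret with caution)" if info["small_sample"] else ""
    print(f"{group}: n={info['n']}{note}")
```

Flagged subgroups stay in the summary, so a report can state explicitly what each sample does and does not represent.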

Cumulative. As mentioned above, the College has been actively focusing on systematic data collection since 2016. As a result, we have implemented the data collection process illustrated in Table 1, which depicts multiple sources of data on program quality.

Informing Practice. Through this process, we have been able to identify where programs are using data well for continuous improvement and innovation, as well as areas for continuous improvement. Through the ongoing Annual Report and ALOAR process, as well as milestone reviews such as the conjoined State of California and CAEP self-study process, areas where more or better data and evidence are needed were exposed. Additionally, areas of inconsistency across programs became apparent. Further, we understand that the reliability and validity of instruments, as well as transparency, may be improved.